PROMPT ENGINEERING

Demystified: what actually matters when talking to AI, and what doesn't. The 90/10 rule, meta-prompting, context engineering, and case studies.

The Era of 'Magic Words' Is Over

Model reasoning capability has surged from roughly 15% in 2022 to 95% in 2026, while the need for syntax precision has plummeted from 85% to 10% over the same period. The crossover point was 2024: context now matters more than syntax.

Low-leverage work (wording perfectionism) yields diminishing returns. High-leverage work (defining the role and constraints) drives the alpha.

The timeline: 2022 (GPT-3.5 Era), 2023 (GPT-4 Launch), 2024 (Multi-modal), 2025 (Reasoning Models), 2026 (Agentic AI). Each generation has made rigid prompt syntax less important and clear thinking more important.

Source: MIT/Columbia Study on Generative AI Adoption, 2024

What All Six LLMs Agreed On

Six assistants were tested: Claude, ChatGPT, Gemini, Grok, Copilot, and MANUS. Each arrived at the same conclusions independently:

1. Rigid prompt engineering is overrated for daily knowledge work

2. Context and clarity matter far more than syntax and formatting

3. Meta-prompting is a valid, efficient, and endorsed method

4. Complex analytical tasks still benefit from detailed prompts

5. Routine tasks (emails, summaries) need minimal prompting

The consensus was not manufactured. Each model was asked independently whether prompt engineering is still necessary, and all converged on the same answer.

The Shift: From Prompt to Context Engineering

The quality of your output depends on the clarity of your thinking, not the precision of your wording.

Prompt engineering is not a hidden knowledge base or a paid Udemy course. It is simply you talking to the AI and explaining what you need, in your own cadence and with your own knowledge and context.

OLD THINKING (outdated): Memorize exact syntax, copy-paste prompt templates for routine tasks, trick the model with hacks, focus on wording precision, invest time in prompt engineering courses.

NEW REALITY: The best way to speak to AI is to speak to AI. Use dictation where possible. Define the role clearly. Provide relevant context. Set constraints and guardrails. Iterate based on output.

The 90/10 Rule of Thumb

90% OF TASKS: Just talk naturally. No special syntax needed. Be clear, be specific, iterate. This covers emails and correspondence, meeting summaries, first-draft documents, research summaries, and data formatting.

10% OF TASKS: Invest in the prompt. Complex, high-stakes work where structure drives quality. This includes DCF and financial models, complex multi-step analysis, regulatory compliance documents, client-facing proposals, and risk assessment frameworks.

The decision is simple: is this a routine task? Just talk naturally. Is the output high-stakes? Structure the prompt. Not sure? Start simple, add structure if needed.

Your Secret Weapon: Meta-Prompting

Let the AI write the prompt for you. Seriously.

Step 1: Describe your goal — tell the AI what you're trying to accomplish in plain language.

Step 2: Ask it to write the prompt — 'Write me a detailed prompt that will produce [desired output].'

Step 3: Review and refine — the AI generates a structured prompt. You adjust what matters.

Step 4: Execute the prompt — feed the refined prompt back to the AI for the actual task.

Why it works: The AI already knows prompt best practices. You just need to tell it what you want, not how to ask for it. This is especially useful when you use one LLM to write the prompt and then take it to another LLM to execute.
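The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: `meta_prompt`, `stub_writer`, and `stub_executor` are hypothetical names, and the two "models" are plain functions standing in for whichever LLMs you actually use, so the sketch runs without any API access.

```python
from typing import Callable

# Assumption: a "model" is any function that takes a prompt and returns a reply.
LLM = Callable[[str], str]


def meta_prompt(
    goal: str,
    writer: LLM,
    executor: LLM,
    refine: Callable[[str], str] = lambda prompt: prompt,
) -> str:
    """Run the four meta-prompting steps and return the final output."""
    # Steps 1 and 2: state the goal in plain language and ask for a prompt.
    request = (
        "Write me a detailed prompt that will produce the following output:\n"
        f"{goal}"
    )
    draft_prompt = writer(request)
    # Step 3: review and refine (a human edit in practice, a function here).
    final_prompt = refine(draft_prompt)
    # Step 4: execute the refined prompt, possibly on a different model.
    return executor(final_prompt)


# Stub models, purely illustrative.
def stub_writer(prompt: str) -> str:
    return "ROLE: analyst\nTASK: " + prompt.splitlines()[-1]


def stub_executor(prompt: str) -> str:
    return f"[executed {len(prompt.splitlines())}-line prompt]"


result = meta_prompt(
    goal="a one-page summary of yesterday's client meeting",
    writer=stub_writer,
    executor=stub_executor,
    refine=lambda p: p + "\nCONSTRAINTS: use only the attached notes",
)
print(result)
```

Keeping `writer` and `executor` as separate arguments mirrors the point above: one LLM can write the prompt and another can execute it.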

Deconstructing the Master Prompt

The anatomy of a well-structured prompt for the 10% of tasks that need it:

ROLE: You are a senior equity analyst at Anchor Capital specializing in SA-listed equities.

CONTEXT: The client holds a balanced portfolio (60/40 equity/bond) with a 5-year investment horizon.

TASK: Analyze [Company X] and produce a recommendation including valuation, risks, and catalyst timeline.

FORMAT: Output as a structured report with sections: Executive Summary | Valuation | Risks | Recommendation.

CONSTRAINTS: Use only publicly available data. Do not hallucinate financial figures.

Each section is a lever — adjust them based on the complexity of your task. You don't need all five every time. For simple tasks, just the task is enough.
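As a rough sketch of the "levers" idea, the helper below assembles a prompt from whichever sections you supply and skips the rest. The function name `build_prompt` and its parameters are assumptions made for illustration; only the five section labels come from the anatomy above.

```python
from typing import Optional

# Section labels in the order used in the anatomy above.
SECTION_ORDER = ("ROLE", "CONTEXT", "TASK", "FORMAT", "CONSTRAINTS")


def build_prompt(
    task: str,
    role: Optional[str] = None,
    context: Optional[str] = None,
    output_format: Optional[str] = None,
    constraints: Optional[str] = None,
) -> str:
    """Join the supplied sections into one prompt, omitting empty ones."""
    sections = {
        "ROLE": role,
        "CONTEXT": context,
        "TASK": task,
        "FORMAT": output_format,
        "CONSTRAINTS": constraints,
    }
    lines = [
        f"{name}: {sections[name].strip()}"
        for name in SECTION_ORDER
        if sections[name] and sections[name].strip()
    ]
    return "\n\n".join(lines)


# Simple task: the task alone is enough.
simple = build_prompt("Grammar check the attached document.")

# High-stakes task: pull every lever.
full = build_prompt(
    task="Analyze [Company X] and produce a recommendation.",
    role="You are a senior equity analyst.",
    context="The client holds a balanced portfolio with a 5-year horizon.",
    output_format="Executive Summary | Valuation | Risks | Recommendation",
    constraints="Use only publicly available data.",
)
print(simple)
print(full)
```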

The SamurAI Rule: Intent > Engineering

If you can explain your thinking to a junior analyst — or an intern — you can explain it to an LLM.

The checklist is the same as delegating to a human:

- Did you give them the background context?

- Did you define the output format?

- Did you set the boundaries?

Personally, I use the dictation function. Screenshots and voice together make up 95% of my inputs. The only time I really type is for short things like 'grammar check' with an attached document, or a two-word reply with a screenshot. The skill is clear thinking, not prompt syntax.

Case Study: The 90% — Email Drafting

Three approaches compared side by side:

TOO LAZY: 'Write me an email.' Result: Generic, unusable output. No context, no tone, no structure. Requires a complete rewrite.

OVER-ENGINEERED: A long detailed prompt specifying AIDA framework, Flesch-Kincaid readability scores, and elaborate formatting rules. Result: 10 minutes crafting the prompt. Output quality identical to the sweet spot. Net time wasted.

SWEET SPOT: 'Draft a follow-up email to John at ABC Corp about our meeting yesterday. Tone: professional but warm. Key points: thank him for his time, confirm next steps (due diligence documents to be sent by Friday), and suggest a call next Tuesday to discuss.' Result: 30 seconds to write. Ready to send with minor tweaks. Same quality as the over-engineered version.

Case Study: The 10% — DCF Analysis

This is where prompt investment pays off because the complexity demands it.

LAZY PROMPT: 'Do a DCF for Naspers.' What you get: Generic assumptions, no source citations, hallucinated financial figures, unusable for client work.

ENGINEERED PROMPT: Full Role/Task/Assumptions/Output format/Constraints — specifying the analyst role, the company context, the required methodology (FCFF, WACC assumptions, terminal growth rate), the output format (sensitivity matrix), and the constraints (publicly available data only, cite all sources).

WHAT YOU GET: A sensitivity matrix showing fair value across WACC and terminal growth rate scenarios. Fair Value R3,840/share, Current Price R3,250/share, Implied Upside +18.2%.
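To make the output concrete, here is a hedged sketch of the arithmetic behind such a result. The implied upside uses the two per-share figures quoted above. The sensitivity grid uses a textbook Gordon-growth terminal value and a made-up three-year FCFF forecast; those inputs and the function names are illustrative assumptions, not Naspers data or the model's actual method.

```python
from typing import List, Sequence


def implied_upside(fair_value: float, current_price: float) -> float:
    """Percentage gap between fair value and current price."""
    return (fair_value / current_price - 1.0) * 100.0


def dcf_value(cash_flows: Sequence[float], wacc: float, terminal_growth: float) -> float:
    """PV of explicit FCFF forecasts plus a Gordon-growth terminal value."""
    if wacc <= terminal_growth:
        raise ValueError("WACC must exceed the terminal growth rate")
    # Discount each forecast year back to today.
    pv_forecast = sum(cf / (1 + wacc) ** t for t, cf in enumerate(cash_flows, start=1))
    # Terminal value at the end of the forecast, then discounted to today.
    terminal = cash_flows[-1] * (1 + terminal_growth) / (wacc - terminal_growth)
    return pv_forecast + terminal / (1 + wacc) ** len(cash_flows)


def sensitivity_matrix(
    cash_flows: Sequence[float], waccs: Sequence[float], growths: Sequence[float]
) -> List[List[float]]:
    """Rows are WACC scenarios, columns are terminal growth scenarios."""
    return [[dcf_value(cash_flows, w, g) for g in growths] for w in waccs]


# The per-share figures quoted above.
print(f"Implied upside: {implied_upside(3840, 3250):+.1f}%")

# Hypothetical FCFF forecast (illustrative numbers only).
forecast = [100.0, 110.0, 120.0]
waccs = [0.09, 0.10, 0.11]
growths = [0.02, 0.03, 0.04]
matrix = sensitivity_matrix(forecast, waccs, growths)
for wacc, row in zip(waccs, matrix):
    print(f"WACC {wacc:.0%}: " + "  ".join(f"{value:8.1f}" for value in row))
```

Value falls as WACC rises and climbs as terminal growth rises, which is why a grid of scenarios is more useful for client work than a single point estimate.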

The 10% is where structured prompting delivers real alpha.

When to Invest in the Prompt

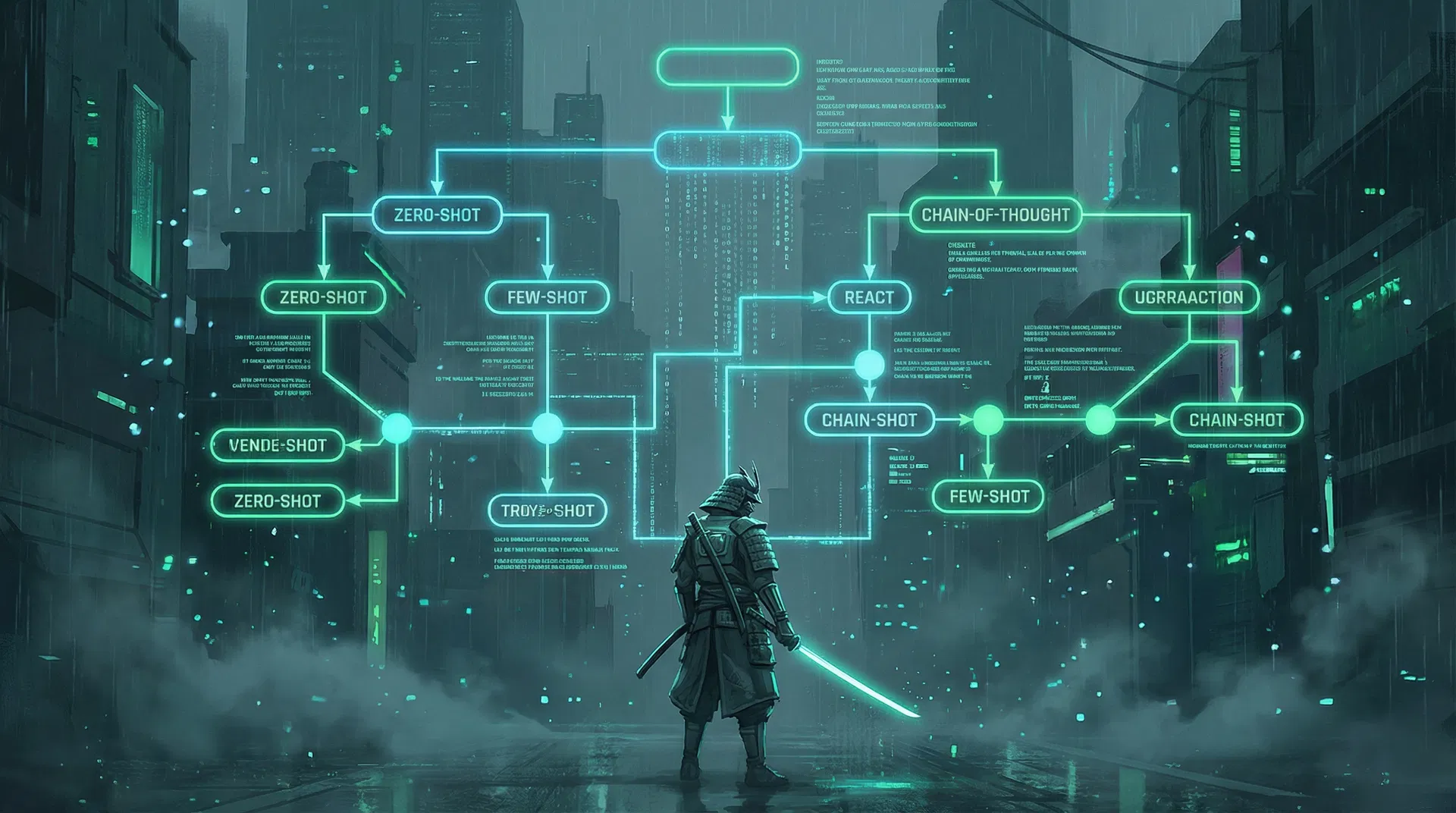

A simple decision tree:

Is this a routine task? YES → Just talk naturally (90%) → Done.

Is this a routine task? NO → Is the output high-stakes? YES → Structure the prompt (10%) → Done.

Is this a routine task? NO → Is the output high-stakes? NO → Start simple, add structure if needed → Done.

The decision is simple. Don't over-think it.
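The tree is small enough to write as a single function. This is only a sketch of the logic above; the name `prompting_approach` and its boolean parameters are assumptions for illustration.

```python
def prompting_approach(is_routine: bool, is_high_stakes: bool = False) -> str:
    """Return the recommended approach from the decision tree."""
    if is_routine:
        # The 90%: emails, summaries, first drafts.
        return "Just talk naturally"
    if is_high_stakes:
        # The 10%: financial models, compliance documents, client proposals.
        return "Structure the prompt"
    # Everything in between.
    return "Start simple, add structure if needed"


print(prompting_approach(is_routine=True))
print(prompting_approach(is_routine=False, is_high_stakes=True))
print(prompting_approach(is_routine=False, is_high_stakes=False))
```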

The Bottom Line

Stop optimizing prompts. Start optimizing thinking.

1. Talk naturally — the AI understands you. Stop trying to speak 'AI language'. There is no AI language.

2. Invest where it matters — save structured prompts for the 10% of tasks that actually need them.

3. Use meta-prompting: let the AI help you write better prompts. Structuring text is exactly the kind of work it handles well.

The best prompt engineers aren't the ones who memorize syntax. They're the ones who think clearly about what they need.

As one LLM put it during the research: 'That's not the point. The point is that you gave me clear instructions, you laid out the scenario, you told me what you wanted — that's the actual prompt. I can go do the structuring and the syntax.'